After installing and configuring all nodes, start the seed node and verify that it is operational by running the command "nodetool status". Then start the other nodes in the cluster one at a time, allowing each node to finish joining the cluster before you start the next one. After all nodes have started, the "nodetool status" command displays results similar to those shown below.
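Before starting the next node, you can check that every node listed by `nodetool status` is Up and Normal ("UN"). The following is a minimal Python sketch under these assumptions: the output follows the usual `nodetool status` layout, in which each node row begins with a two-letter status/state code followed by the node address, and the helper names are hypothetical. The sample text is illustrative, not captured from a real cluster.

```python
import re

# In `nodetool status` output, each node row starts with a two-letter code:
# U (Up) or D (Down), then N (Normal), L (Leaving), J (Joining) or M (Moving),
# followed by the node's address.
NODE_ROW = re.compile(r"^([UD])([NLJM])\s+(\S+)")


def node_states(status_output: str) -> dict[str, str]:
    """Map each node address to its two-letter code, e.g. {'10.1.1.101': 'UN'}."""
    states = {}
    for line in status_output.splitlines():
        match = NODE_ROW.match(line.strip())
        if match:
            states[match.group(3)] = match.group(1) + match.group(2)
    return states


def ready_for_next_node(status_output: str, expected_nodes: int) -> bool:
    """True once exactly `expected_nodes` nodes are listed and all are Up/Normal."""
    states = node_states(status_output)
    return len(states) == expected_nodes and all(s == "UN" for s in states.values())


# Illustrative output only: the second node is still joining (UJ),
# so the third node should not be started yet.
SAMPLE = """\
Datacenter: datacenter1
=======================
Status=Up/Down
|/ State=Normal/Leaving/Joining/Moving
--  Address     Load       Tokens  Owns  Host ID    Rack
UN  10.1.1.101  1.2 MiB    256     ?     <host-id>  rack1
UJ  10.1.1.102  0.5 MiB    256     ?     <host-id>  rack1
"""

if __name__ == "__main__":
    # Against a live node (assuming nodetool is on the PATH) you could use:
    # subprocess.run(["nodetool", "status"], capture_output=True, text=True).stdout
    print(node_states(SAMPLE))
    print(ready_for_next_node(SAMPLE, 2))
```

Checking for exactly the expected node count, rather than "at least", catches the case where a node you started has not yet appeared in the ring at all.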


seed-nodes = ["akka.tcp://twcloud@${seed-node.ip}:2552"]

Replace ${seed-node.ip} with the IP addresses of the seed nodes, so that the setting looks similar to the following:

seed-nodes = ["akka.tcp://twcloud@10.1.1.111:2552","akka.tcp://twcloud@10.1.1.112:2552","akka.tcp://twcloud@10.1.1.113:2552"]
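With several seed nodes, the seed-nodes value can be generated rather than typed by hand. This Python sketch assumes the actor system name (twcloud) and port (2552) from the template above; the function name is hypothetical.

```python
def akka_seed_nodes(seed_ips: list[str], system: str = "twcloud", port: int = 2552) -> str:
    """Render the seed-nodes setting from a list of seed node IP addresses."""
    # One akka.tcp URI per seed node; the system name and port default to the
    # values used in the template above.
    uris = [f'"akka.tcp://{system}@{ip}:{port}"' for ip in seed_ips]
    return "seed-nodes = [" + ",".join(uris) + "]"


if __name__ == "__main__":
    print(akka_seed_nodes(["10.1.1.111", "10.1.1.112", "10.1.1.113"]))
```

Called with the three sample seed addresses, it prints the same line as the example above.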

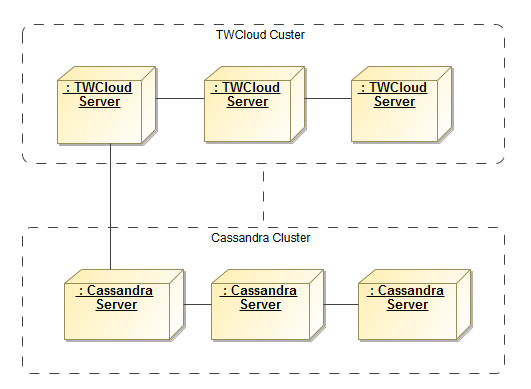

2. Cassandra configuration parameters

- esi.persistence.cassandra.connection.seeds

The value of this parameter is a list of Cassandra node IP addresses. To find this parameter in application.conf, look for the following:

# List of comma delimited host.
# Setting the value as localhost will be resolved from InetAddress.getLocalHost().getHostAddress()
# Ex. seeds = ["10.1.1.123", "10.1.1.124", "10.1.1.125"]
seeds = ["localhost"]

The default value is ["localhost"], which is only suitable for a single-node server where Teamwork Cloud and Cassandra are deployed on the same machine. For the sample environment, change it to the following:

seeds = ["10.1.1.101", "10.1.1.102", "10.1.1.103"]
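A small sketch of rendering the Cassandra seeds setting with checks that reflect the note above: "localhost" only makes sense when Teamwork Cloud and Cassandra share a single machine. The helper name and the rule that every other entry must be a literal IP address are assumptions for illustration; Teamwork Cloud may also accept host names.

```python
import ipaddress


def cassandra_seeds(hosts: list[str]) -> str:
    """Render the `seeds = [...]` line after some basic sanity checks.

    Assumed rules for illustration: "localhost" is allowed only as the sole
    entry (single-machine deployment), and every other entry must be a
    literal IPv4 or IPv6 address.
    """
    if not hosts:
        raise ValueError("at least one Cassandra seed is required")
    if "localhost" in hosts and len(hosts) > 1:
        raise ValueError('"localhost" is only valid for a single-node deployment')
    for host in hosts:
        if host != "localhost":
            ipaddress.ip_address(host)  # raises ValueError for a malformed address
    return "seeds = [" + ", ".join(f'"{host}"' for host in hosts) + "]"


if __name__ == "__main__":
    print(cassandra_seeds(["10.1.1.101", "10.1.1.102", "10.1.1.103"]))
```

For the sample environment it prints the same seeds line as above.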

- esi.persistence.cassandra.keyspace.replication-factor

This parameter defines the Cassandra replication factor for the "esi" keyspace used by Teamwork Cloud. The replication factor is the number of copies of your data that Cassandra stores, each on a different node. For example, a replication factor of 2 means that your data is written to 2 nodes.

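For a three-node cluster to survive the loss of 1 node, set the replication factor to 3. Because Teamwork Cloud reads and writes at the QUORUM consistency level, each request needs a majority of the replicas to respond: 2 of 3 with a replication factor of 3, but both replicas with a replication factor of 2, in which case any data with a copy on the failed node could no longer be read or written.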

persistence {
  cassandra {
    keyspace {
      replication-factor = 3
    }
  }
}

Note that this setting is used only the first time Teamwork Cloud connects to Cassandra and creates the esi keyspace. Changing the replication factor after the keyspace has been created is a more complex task; read this document if you need to change it.
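The node-loss arithmetic behind the replication-factor advice can be sketched in Python. It assumes QUORUM for both reads and writes, as Teamwork Cloud uses, and Cassandra's definition of a quorum as a strict majority of replicas, floor(RF / 2) + 1. The function names are illustrative, not part of Teamwork Cloud.

```python
def quorum(replication_factor: int) -> int:
    """Replicas that must respond to a QUORUM read or write: a strict majority."""
    return replication_factor // 2 + 1


def tolerated_replica_losses(replication_factor: int) -> int:
    """Replicas of a row that may be down while QUORUM requests still succeed."""
    return replication_factor - quorum(replication_factor)


if __name__ == "__main__":
    for rf in (1, 2, 3, 5):
        print(f"RF={rf}: quorum={quorum(rf)}, tolerated losses={tolerated_replica_losses(rf)}")
```

With a replication factor of 3 on three nodes, every node holds a copy of every row and one replica may be lost; a replication factor of 2 tolerates no losses under QUORUM, which is why 3 is recommended.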

Teamwork Cloud uses QUORUM for both write and read consistency levels.

Click here for a detailed explanation of data consistency.
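Why QUORUM for both reads and writes gives consistent reads can be shown with the standard overlap condition: if the number of replicas read ($R$) plus the number written ($W$) exceeds the replication factor ($RF$), every read reaches at least one replica that received the latest write. With $Q$ as the quorum size:

$$
\begin{aligned}
Q &= \left\lfloor \frac{RF}{2} \right\rfloor + 1 \\
R + W &= 2Q \ge RF + 1 > RF \\
RF = 3:\quad Q &= 2, \qquad R + W = 4 > 3
\end{aligned}
$$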

To start the Teamwork Cloud cluster, start the server on the seed machine and wait until a message similar to the following appears in server.log:

INFO 2017-02-15 10:57:08.409 TWCloud Cluster with 1 node(s) : [10.1.1.111] [com.nomagic.esi.server.core.actor.ClusterHealthActor, twcloud-esi.actor.other-dispatcher-31]

Then start the server on the remaining machines. Messages like the following in server.log show that all three nodes have formed the cluster:

INFO 2017-02-15 10:58:23.956 TWCloud Cluster with 2 node(s) : [10.1.1.111, 10.1.1.112] [com.nomagic.esi.server.core.actor.ClusterHealthActor, twcloud-esi.actor.other-dispatcher-18]

INFO 2017-02-15 10:58:25.963 TWCloud Cluster with 3 node(s) : [10.1.1.111, 10.1.1.112, 10.1.1.113] [com.nomagic.esi.server.core.actor.ClusterHealthActor, twcloud-esi.actor.other-dispatcher-18]
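Waiting for these messages can be scripted. This minimal Python sketch assumes the ClusterHealthActor messages keep exactly the "TWCloud Cluster with N node(s) : [...]" format shown in the log examples; the helper names are hypothetical, and the sample log reuses those three example lines.

```python
import re

# Matches the ClusterHealthActor message format, for example
# "TWCloud Cluster with 2 node(s) : [10.1.1.111, 10.1.1.112]".
CLUSTER_MSG = re.compile(r"TWCloud Cluster with (\d+) node\(s\) : \[([^\]]*)\]")


def latest_cluster_members(log_text: str) -> list[str]:
    """Return the member addresses from the most recent cluster message ([] if none)."""
    members: list[str] = []
    for match in CLUSTER_MSG.finditer(log_text):
        members = [ip.strip() for ip in match.group(2).split(",") if ip.strip()]
    return members


def cluster_formed(log_text: str, expected_nodes: int) -> bool:
    """True once the latest cluster message lists at least `expected_nodes` members."""
    return len(latest_cluster_members(log_text)) >= expected_nodes


# The three example messages, one per line.
SAMPLE_LOG = """\
INFO 2017-02-15 10:57:08.409 TWCloud Cluster with 1 node(s) : [10.1.1.111] [com.nomagic.esi.server.core.actor.ClusterHealthActor, twcloud-esi.actor.other-dispatcher-31]
INFO 2017-02-15 10:58:23.956 TWCloud Cluster with 2 node(s) : [10.1.1.111, 10.1.1.112] [com.nomagic.esi.server.core.actor.ClusterHealthActor, twcloud-esi.actor.other-dispatcher-18]
INFO 2017-02-15 10:58:25.963 TWCloud Cluster with 3 node(s) : [10.1.1.111, 10.1.1.112, 10.1.1.113] [com.nomagic.esi.server.core.actor.ClusterHealthActor, twcloud-esi.actor.other-dispatcher-18]
"""

if __name__ == "__main__":
    # On a server, read the real log instead (the path is an assumption):
    # with open("server.log", encoding="utf-8") as f: text = f.read()
    print(latest_cluster_members(SAMPLE_LOG))
    print(cluster_formed(SAMPLE_LOG, 3))
```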
